Dr Gordon Wetzstein – A Human-centric Approach to Near-eye Display Engineering

Virtual and augmented reality technologies are now rapidly gaining traction in our society. Yet even as they improve, these devices continue to face major challenges relating to the wide variability of human vision. In their research, Dr Gordon Wetzstein and his colleagues at Stanford University explore innovative new ways to overcome these challenges, through the latest advances in both optics and vision science. In demonstrating ground-breaking innovations to near-eye displays and sensors, the team’s work could soon bring enormous benefits to users spanning a diverse spectrum of visual ability.

Near-eye Displays

Virtual reality is among the most captivating technologies available today – enabling users to view and manipulate virtual 3D scenes through the movements of their eyes and bodies. As its capabilities rapidly improve, this technology is now offering an increasingly abundant range of applications: from allowing us to explore virtual worlds in video games, to helping architects and city planners to better visualise their designs.

One crucial component of virtual reality hardware is the near-eye display, which provides the interface between a user and the programs they interact with. These devices are cutting-edge in themselves, but still have one major hurdle to overcome before their widespread use can become cemented in our society. The problem relates to the widely varying capabilities of our eyes.

The human eye is a key element of virtual reality technologies – receiving all of the optical waves produced by near-eye displays so that our brains can interpret them as 3D images. However, it is also the one element of the overall system that designers ultimately have no control over. Naturally, the focusing responses of human eyes vary significantly between different people. Therefore, as researchers develop more realistic user experiences in near-eye displays, it is critical to consider how these diverse focusing responses can be recreated within the displays themselves.

Artificial Focusing

As light emanates from a specific point on an object, it will travel in many different directions. In order for us to properly focus on objects, this light must re-converge at a specific point on the retina, allowing our brains to reconstruct an image of the original object. This is made possible by the eye’s crystalline lens, which uses the property of refraction to bend the paths of optical waves entering the pupil in different directions: focusing them onto a single spot on the retina. As objects become closer, the angles of the waves entering the eye become sharper, and require higher degrees of refraction to remain focused on the retina. To account for this, circular muscles surrounding the lens will contract – changing the shape of the lens to increase the refraction of the light passing through it.
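The link between viewing distance and the refraction the lens must supply can be made concrete with the standard optometric convention, in which focusing demand in dioptres is the reciprocal of the viewing distance in metres. The short sketch below is purely illustrative; the function name is an assumption and does not come from the research described here.

```python
def accommodation_demand(distance_m: float) -> float:
    """Focusing demand in dioptres (D) for an object at distance_m metres.

    Uses the standard convention D = 1 / distance, so an object very far
    away demands close to 0 D and a nearby object demands more.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 1.0 / distance_m


# Closer objects require progressively more focusing power.
for d in (4.0, 1.0, 0.5, 0.25):
    print(f"{d:>5.2f} m -> {accommodation_demand(d):.2f} D")
```

Halving the viewing distance doubles the demand, which is why nearby objects are the hardest to keep in focus.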

However, when viewing a near-eye display, our eyes will no longer do this automatically, because the user perceives the image as a 2D plane floating in front of their eyes rather than an object in a 3D environment. Instead, natural focusing responses must be artificially rendered in the display. If this isn’t done correctly, a user’s brain will not be able to interpret displayed images as intended – diminishing the comfort of their viewing experience.

Circumventing a Conflict

Through a study published in 2015, Dr Wetzstein and his colleagues developed a technique to alleviate the nausea and discomfort commonly experienced by users of current virtual reality systems. The problem stems from a conflict between two responses: the simultaneous, opposite rotations of both eyes to fuse a single 3D image (vergence), and changes in the shape of each eye’s lens to keep images of objects clearly focused (accommodation). It arises in all conventional 3D displays, and must be overcome in order for users to comfortably perceive objects at varying virtual distances.
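The size of this conflict can be estimated with simple geometry. The sketch below assumes a conventional headset whose optics place the image at one fixed focal distance; the interpupillary distance of 63 mm, the 2 m focal distance, and both function names are illustrative assumptions rather than values from the study.

```python
import math


def vergence_angle_deg(distance_m: float, ipd_m: float = 0.063) -> float:
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at distance_m. ipd_m is an assumed interpupillary distance."""
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))


def focus_conflict_dioptres(virtual_distance_m: float,
                            display_focal_distance_m: float) -> float:
    """Mismatch, in dioptres, between where the eyes converge (the virtual
    object) and where they must focus (the display's fixed optical distance)."""
    return abs(1.0 / virtual_distance_m - 1.0 / display_focal_distance_m)


DISPLAY_FOCAL_DISTANCE_M = 2.0  # assumed fixed focal distance of the headset

for virtual_d in (0.5, 1.0, 2.0, 10.0):
    angle = vergence_angle_deg(virtual_d)
    conflict = focus_conflict_dioptres(virtual_d, DISPLAY_FOCAL_DISTANCE_M)
    print(f"object at {virtual_d:>4.1f} m: vergence {angle:.2f} deg, "
          f"conflict {conflict:.2f} D")
```

The conflict vanishes only when the virtual object sits at the display's focal distance, and grows quickly for nearby objects.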

To solve the issue, Dr Wetzstein’s team combined the established principles of artificial focus rendering with emerging principles of ‘light field’ technology, in which light from a single source travels in multiple different directions. In their study, light fields were produced by two different displays: each displaying the 3D image from a slightly different perspective. Since each display was only slightly larger than the size of a pupil, and projected the image at a range of viewing angles, a separate focusing response could be presented to each eye independently. Ultimately, the innovation enabled high-resolution images, while significantly improving the user’s viewing comfort.

Tracking a User’s Gaze

One alternative technique for achieving reliable focus rendering involves ‘gaze tracking’, in which sensors built into near-eye displays continually monitor the direction in which a user is looking. So far, the technology has been held back both by its high power requirements and by the long delay times in the images produced. This has meant that image rendering was too slow to capture the rapid, subtle movements of our eyes.

In a recent study, Dr Wetzstein and his colleagues proposed a more sophisticated system, which is about 100 times faster both in capturing eye movements and in rendering images. They achieved this through a unique combination of emerging ultra-fast sensors and novel algorithms that can estimate in real time where a user’s gaze will point next, allowing displays to be updated as rapidly as 10,000 times per second, far more quickly than even our most rapid eye movements. After testing their ultra-fast gaze tracking devices on real users, the researchers demonstrated highly accurate images over wide fields of view, paving the way for their widespread use in virtual reality.
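To give a feel for what estimating where the gaze will point next can involve, the sketch below uses plain constant-velocity extrapolation from the two most recent gaze samples. This is a deliberately simple stand-in and not the team's algorithm; the function name, the sample format, and the example numbers are all assumptions made for illustration. Only the 10,000 updates per second figure comes from the text above.

```python
def predict_gaze(samples, lookahead_s):
    """Extrapolate the gaze direction lookahead_s seconds ahead.

    samples: time-ordered list of (t_seconds, x_deg, y_deg) tuples holding
    at least two entries. Assumes constant angular velocity between the
    last two samples (a simplification, not the published method).
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    (t0, x0, y0), (t1, x1, y1) = samples[-2], samples[-1]
    dt = t1 - t0
    if dt <= 0:
        raise ValueError("samples must be strictly time-ordered")
    vx = (x1 - x0) / dt  # horizontal angular velocity, deg/s
    vy = (y1 - y0) / dt  # vertical angular velocity, deg/s
    return x1 + vx * lookahead_s, y1 + vy * lookahead_s


UPDATE_RATE_HZ = 10_000           # update rate quoted in the article
frame_s = 1.0 / UPDATE_RATE_HZ    # 0.1 ms between updates

# Two consecutive samples of a fast horizontal eye movement (assumed values).
history = [(0.0, 0.0, 0.0), (frame_s, 0.05, 0.0)]
print(predict_gaze(history, lookahead_s=0.001))
```

At this update rate each frame lasts a tenth of a millisecond, so even a crude predictor only has to bridge a very short interval.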

Variability in Vision

In most young people, lenses can seamlessly adjust themselves as they look around at objects in the real world. As we age, however, the material structure of the lens becomes stiffer, meaning we gradually lose the ability to easily focus on nearby objects, resulting in a condition known as ‘presbyopia’. This ultimately means that the focusing responses of humans as a whole are extremely varied.

When viewing near-eye displays, all users will perceive images at a single distance. However, problems arise for younger people, who can more readily notice differences between their natural focusing responses and those they perceive in near-eye displays. Until recently, this diversity in vision was extremely difficult to accommodate. Dr Wetzstein addresses the issue both for near-eye displays and for eyeglasses that correct for vision impairment.

Improving Experiences for Younger Users

In parallel with gaze tracking technology, other studies have worked towards ‘varifocal’ displays. Some of these physically alter the distance between the eye and a display. Others incorporate ‘focus-tuneable lenses’ that can mimic the function of the lenses in our own eyes, by changing their shapes to alter their refractive properties.

In each of these cases, the perceived distance between the eye and a display can be readily altered, enabling devices to reproduce far more realistic focusing responses for younger users. Crucially, both approaches can be readily combined with gaze tracking technology to alter the distance between the eye and a display, depending on the part of the image a user is looking at.
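Both routes to a varifocal display follow from the thin-lens equation. With the screen placed just inside the focal length of the headset lens, the user sees a magnified virtual image whose distance depends on both the screen position and the lens power. The sketch below is a simplified model that ignores the gap between the eye and the lens; the function names and the example numbers are assumptions, not parameters of any published prototype.

```python
def virtual_image_distance(display_distance_m: float, lens_power_d: float) -> float:
    """Distance of the virtual image from the lens, using the thin-lens
    relation 1/image = 1/display - lens_power (distances in metres,
    power in dioptres). Returns infinity when the screen sits exactly at
    the focal length."""
    vergence = 1.0 / display_distance_m - lens_power_d
    if vergence < 0:
        raise ValueError("screen is beyond the focal length: no virtual image")
    return float("inf") if vergence == 0 else 1.0 / vergence


def lens_power_for(target_distance_m: float, display_distance_m: float) -> float:
    """Focus-tuneable lens route: power needed to place the virtual image
    at target_distance_m while the screen stays fixed."""
    return 1.0 / display_distance_m - 1.0 / target_distance_m


def display_distance_for(target_distance_m: float, lens_power_d: float) -> float:
    """Moving-screen route: screen position needed to place the virtual
    image at target_distance_m while the lens power stays fixed."""
    return 1.0 / (lens_power_d + 1.0 / target_distance_m)


# Place the virtual image 0.5 m away using each route (assumed values).
print(lens_power_for(0.5, display_distance_m=0.04))      # tune the lens
print(display_distance_for(0.5, lens_power_d=20.0))      # move the screen
```

In this simplified model, a shift of a few millimetres in screen position, or a few dioptres of lens power, moves the perceived image across a wide range of distances.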

One principal advantage of this approach is that it enables the opposite motions of both eyes required for viewing 3D images to be directly coupled with the reshaping of both lenses, instead of being in conflict with it. This removes the previous conflict between both actions, while maintaining the use of gaze tracking. In addition, varifocal displays based on focus-tuneable lenses enable the focusing responses recreated in the images to be precisely tailored to the needs of specific users. This ability offered a breakthrough in the accessibility of near-eye displays for younger users.

Introducing: Autofocals

Dr Wetzstein and his colleagues have developed novel electronic eyeglasses named ‘autofocals’, which combine gaze tracking software with a depth sensor to drive focus-tuneable lenses automatically. This approach enables the devices to automatically correct for presbyopia in older users by externally mimicking the response of a healthy, non-stiffened lens. Furthermore, the level of this automatic adjustment can be tailored to the degree of presbyopia in specific users, significantly improving its accessibility.
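The control idea described above can be outlined in a few lines. The sketch below is a hypothetical simplification and not the published autofocals controller: it assumes the depth sensor's reading at the gaze point is preferred when available, that the user's remaining focusing ability is summarised by a single number, and that the tuneable lens has a maximum power of 3 dioptres. All names and values are illustrative.

```python
from typing import Optional


def fixation_distance_m(vergence_distance_m: float,
                        sensor_distance_m: Optional[float]) -> float:
    """Pick a fixation distance: use the depth sensor's reading at the gaze
    point when it is available, otherwise fall back to the distance implied
    by the convergence of the two eyes (assumed fusion rule)."""
    return sensor_distance_m if sensor_distance_m is not None else vergence_distance_m


def lens_add_power(distance_m: float,
                   residual_accommodation_d: float,
                   max_lens_power_d: float = 3.0) -> float:
    """Extra power, in dioptres, the tuneable lens should supply.

    The focusing demand is 1 / distance; whatever the user's own eye can
    still provide (residual_accommodation_d) is subtracted, and the result
    is clamped to the range the lens can deliver."""
    demand = 1.0 / distance_m
    add = demand - residual_accommodation_d
    return min(max(add, 0.0), max_lens_power_d)


# A user with 1 D of remaining accommodation reads a page 0.4 m away.
distance = fixation_distance_m(vergence_distance_m=0.45, sensor_distance_m=0.4)
print(lens_add_power(distance, residual_accommodation_d=1.0))
```

Setting the residual accommodation per user is one simple way the correction could be tailored to an individual's degree of presbyopia.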

To analyse the performance of their design, Dr Wetzstein’s team tested it on 19 volunteers – each with varying levels of presbyopia. After completing a visual task, the participants found that the autofocals greatly improved the visual sharpness of 3D images compared with their own vision, and also reported an ultra-fast, highly accurate refocusing response.

Furthermore, the low power requirements of the apparatus, combined with its ability to function in diverse situations without the need for calibration, could soon allow the researchers to integrate their autofocals with small, wearable devices. If achieved, this may lead to a whole new generation of eyeglasses, which would improve the lives of many millions of vision-impaired people globally.

Branching into Holography

Following on from these achievements, the next steps of Dr Wetzstein’s research involve holographic near-eye displays. Holography is now a rapidly growing field of research that exploits the principles of light diffraction, in which waves spread out after passing through narrow gaps, to enable 3D images to be viewed from multiple different angles. If projected from near-eye displays, holograms can allow users to perceive these images without any need for special eyeglasses or external equipment. Currently, however, the technology faces numerous challenges in achieving both high image quality and real-time performance.
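One reason diffraction-based displays are demanding is that the angle through which light can be steered depends on how fine the diffracting structure is. For a pixelated light modulator, the grating equation gives a maximum half-angle of asin(wavelength / (2 × pixel pitch)). The sketch below applies that relation; the modulator, the 520 nm wavelength, and the pixel pitches are assumed example values and are not taken from the team's hardware.

```python
import math


def max_diffraction_half_angle_deg(wavelength_m: float, pixel_pitch_m: float) -> float:
    """Largest angle, in degrees, through which a pixelated modulator can
    diffract light, from the grating equation with a grating period of two
    pixels: sin(theta) = wavelength / (2 * pixel_pitch)."""
    ratio = wavelength_m / (2.0 * pixel_pitch_m)
    if ratio > 1.0:
        raise ValueError("pixel pitch is below half the wavelength")
    return math.degrees(math.asin(ratio))


GREEN_M = 520e-9  # assumed wavelength of green light

for pitch_um in (8.0, 4.0, 1.0):
    angle = max_diffraction_half_angle_deg(GREEN_M, pitch_um * 1e-6)
    print(f"pixel pitch {pitch_um:>3.1f} um -> half-angle {angle:.2f} deg")
```

Smaller pixels spread light over wider angles, which ties image quality and viewing range to the resolution of the underlying hardware.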

By drawing on the achievements they have made so far, and combining them with the latest advances in artificial intelligence, optics and human vision science, Dr Wetzstein’s team is now addressing these challenges in a variety of innovative new ways. Their work could soon pave the way for the widespread use of holography in a new generation of virtual reality displays.

A New Era for Near-eye Displays

Ultimately, Dr Wetzstein and his colleagues hope that the computational near-eye display technologies they develop could bring the widespread application of virtual and augmented reality in our everyday lives one step closer. If achieved, this could open up numerous new avenues of research.

The primary interface between a user and the computer software run by near-eye displays will be crucial in ensuring comfortable viewing experiences for all users. Through the use of these emerging technologies, designers of virtual reality systems will not only achieve this level of comfort, but will also ensure that the technology becomes accessible for all users, regardless of their visual ability.

The team will now continue to draw upon advances in optics, human vision science, and other cutting-edge fields to improve the human-centred capabilities of near-eye displays even further.

Reference

https://doi.org/10.33548/SCIENTIA645

Meet the researcher

Dr Gordon Wetzstein

Electrical Engineering Department

Stanford University

Stanford, CA

USA

Dr Wetzstein completed his PhD in Computer Science at the University of British Columbia in 2011. He has been an Assistant Professor at Stanford’s Electrical Engineering Department since 2014, where he also leads the Stanford Computational Imaging Lab. Dr Wetzstein’s research interests combine the latest advances in computer graphics, computational optics, and applied vision science, which he applies to areas as wide-ranging as next-generation imaging, wearable electronics, and microscopy systems. His groundbreaking research has earned him awards including the 2019 Presidential Early Career Award for Scientists and Engineers (PECASE), the 2018 ACM SIGGRAPH Significant New Researcher Award, a Sloan Fellowship in 2018 and an NSF CAREER Award in 2016.

CONTACT

E: gordon.wetzstein@stanford.edu

W: http://stanford.edu/~gordonwz/

W: http://www.computationalimaging.org/

KEY COLLABORATORS

Yifang (Evan) Peng, Stanford University

Suyeon Choi, Stanford University

Nitish Padmanaban, Stanford University

Robert Konrad, Stanford University

Fu-Chung Huang, Stanford University

Manu Gopakumar, Stanford University

Jonghyun Kim, NVIDIA, Stanford University

Emily Cooper, UC Berkeley

FUNDING

Ford

National Science Foundation (awards 1553333 and 1839974)

Sloan Fellowship

Okawa Research Grant

PECASE by the Army Research Office

FURTHER READING

S Choi, J Kim, Y Peng, G Wetzstein, Optimizing image quality for holographic near-eye displays with Michelson holography, Optica, 2021, 7.

Y Peng, S Choi, N Padmanaban, G Wetzstein, Neural holography with camera-in-the-loop training, ACM Transactions on Graphics (TOG), 2020, 39, 185.

N Padmanaban, R Konrad, G Wetzstein, Autofocals: Evaluating gaze-contingent eyeglasses for presbyopes, Science Advances, 2019, 5, eaav6187.

N Padmanaban, R Konrad, T Stramer, EA Cooper, G Wetzstein, Optimizing virtual reality for all users through gaze-contingent and adaptive focus displays, Proceedings of the National Academy of Sciences, 2017, 114, 2183.

N Padmanaban, Y Peng, G Wetzstein, Holographic near-eye displays based on overlap-add stereograms, ACM Transactions on Graphics (TOG), 2019, 38, 1–13.

F Huang, K Chen, G Wetzstein, The Light Field Stereoscope: Immersive Computer Graphics via Factored Near-Eye Light Field Displays with Focus Cues, ACM SIGGRAPH (Transactions on Graphics 33, 5), 2015.

Creative Commons Licence

(CC BY 4.0)

This work is licensed under a Creative Commons Attribution 4.0 International License.


More articles you may like

How Food Environments Shape Our Eating Habits

How we eat dramatically impacts our health, yet millions of Americans live in ‘food deserts’ – areas with limited access to fresh, nutritious food. Recent research reveals that solving this crisis requires looking beyond just physical access to food to understand how our entire community environment shapes our dietary choices. Through a series of pioneering studies, Dr Terrence Thomas and colleagues at North Carolina A&T State University have been investigating how different aspects of our food environment influence what we put on our plates. Their findings suggest that creating lasting change requires reimagining how communities engage with food at every level.

Probing Electron Dynamics in the Ultrafast Regime

In the atoms that make up the matter around us, negatively charged particles called electrons have properties such as spin and orbital angular momentum. Researchers at Martin Luther University Halle-Wittenberg have developed a theoretical framework which allows them to simulate the dynamics of the spin and orbital angular momentum of electrons in materials when probed with an ultrafast laser pulse. Using this framework, they are able to simulate different materials and improve our understanding of dynamics on an atomic scale.

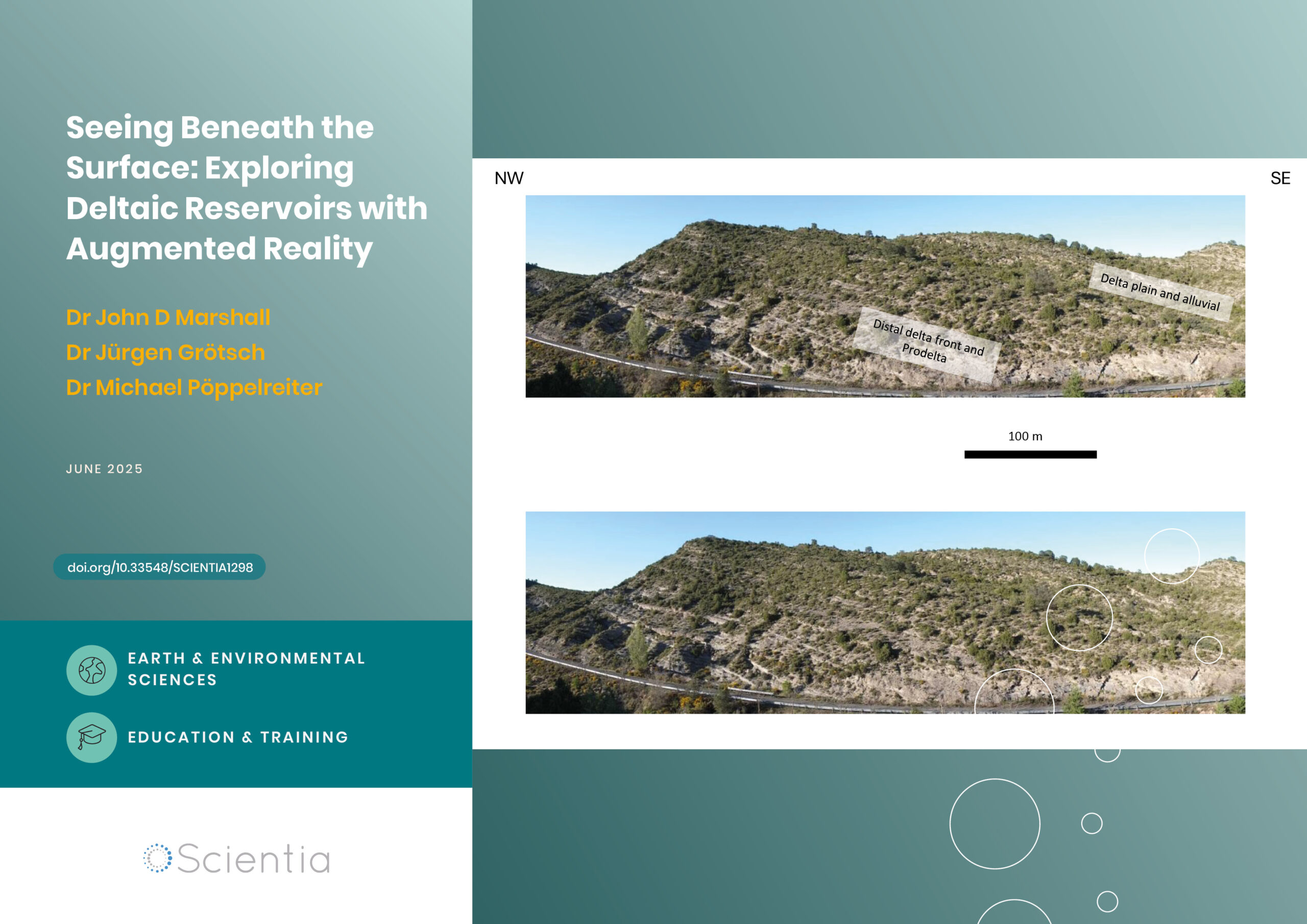

Seeing Beneath the Surface: Exploring Deltaic Reservoirs with Augmented Reality

In the Aínsa Basin of the Spanish Pyrenees, the Mondot-1 well was drilled, cored, and fully logged to capture a detailed record of a long-buried ancient river delta system. Dr. John D. Marshall, Dr. Jürgen Grötsch, and Dr. Michael C. Pöppelreiter with co-workers at Shell International used this core to trace how sediments once flowed across the landscape, and were deposited under shifting tectonic conditions. The team employed augmented reality and interactive virtual displays; these innovative tools offer new ways to explore subsurface depositional systems, and are particularly useful in locations where physical access to the core is difficult, or no longer possible.

Dr Jim Wu | Ziresovir Offers New Hope for Treating Respiratory Syncytial Virus Infections

Respiratory syncytial virus (RSV) causes respiratory tract infections in children and adults. While for many patients the outcomes of infection are mild, for others, infection can prove fatal, and there is a lack of effective treatments. Dr Jim Wu from the Shanghai Ark Biopharmaceutical Company in China carries out his vital research to develop new, safe, and effective treatments to tackle this killer.