Dr Michael D. Bryant | Dr Benito R. Fernández – Mixing Analogue And Digital Computers: The Future is Hybrid

Differential equations are used to model and describe systems across the whole of science and technology. Current computer designs limit our ability to solve very large systems of differential equations. However, a combination of analogue and digital computing might provide a way to massively increase our ability to do this, opening up a new world of scientific and industrial potential. Dr Michael D. Bryant and Dr Benito R. Fernández, along with their colleagues at The University of Texas at Austin, propose to build a computer with mixed analogue and digital components, to achieve a system that can solve very large sets of differential equations.

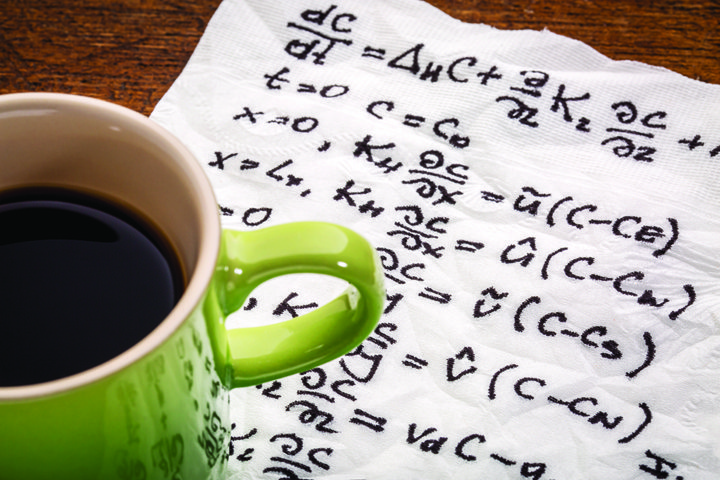

The Joy of Differential Equations

In the world of mathematics, a differential equation is an equation that relates one or more variables to the rates of change of those variables. The rate of change need not be with respect to time t; it may also be with respect to other variables, such as spatial variables. A simple example is a falling object: the differential equation states that the object’s velocity changes at a constant rate, the acceleration due to gravity, and solving it gives the velocity as that acceleration multiplied by the amount of time the object has been falling. In science and engineering, differential equations are often vital in describing the behaviour of components of a system.
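In symbols, the falling-object example might be written as follows. This is a sketch that assumes the object starts from rest and that air resistance is ignored; the symbols v (velocity), g (acceleration due to gravity) and t (time) are chosen here for illustration.

$$
\frac{dv}{dt} = g, \qquad v(0) = 0 \quad\Longrightarrow\quad v(t) = g\,t
$$

The left-hand expression is the differential equation, because it involves the rate of change of v. The right-hand expression is its solution, which contains no derivatives.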

Take a car, for example, with its hundreds of moving parts. The movement of these parts can be captured and described using differential equations that link their weight, shape and flexibility to the forces moving them, and to their physical characteristics. So a car engine, or even the whole vehicle, could be characterised by thousands of differential equations, many of which are interlinked with one another.

Why is this important? The reason this is a useful way to describe a car is that if you can solve these differential equations, then you can simulate the entire behaviour of the car under any possible set of conditions. You can create a mathematical model of the car, and predict how it will behave, or how it will perform, in any possible situation. If you can do that, then you can identify design problems and solve them without having to spend any money on building and driving expensive prototypes into walls or racing them around test tracks until their engines explode.

This makes testing cheaper and much more reliable, and if this is true of cars then it is also true of oil platforms, fighter aircraft and models of the Earth’s climate. In fact, as long as you don’t delve too deeply into the tiny details of the universe where quantum effects take hold, just about anything can be treated as a set of differential equations to be solved.

So, what exactly does ‘solving’ these differential equations mean? A solution to a set of differential equations is another set of equations that precisely defines the variables we are interested in (e.g. speed) in terms of position or some other variables. The unsolved set of equations contains derivatives (the rates of change of variables X with respect to other variables such as Y, Z, U, and possibly time t) that are not useful for any eventual model, but that are unavoidable at the start of the process. At the end of this process, all that remains are the required variables, which can be calculated in relation to one another and to exogenous input variables.

The Problem with Digital Computing

Digital computers are amazing. They can carry out billions of calculations every second, on huge numbers of different variables. Since computers can be programmed to find solutions to sets of equations, can they be used to solve differential equations? And if so, is it simply a case of using more computing power to solve larger sets of these equations?

The answer is no – and yes. The computers we all use are totally reliant on binary operations, which can be used to process numbers with a limited number of digits. They cannot be used to completely mimic real numbers, and so, some rounding or approximation is unavoidable when they are working with continuous variables, such as speed, distance and time. To achieve sufficiently high levels of accuracy when dealing with continuous variables, digital computers need to be as precise as possible and use lots of significant figures for all the variables, including time, since errors will accumulate as the solution evolves over time. When working with thousands or even millions of parallel differential equations, this means a fantastic number of computations and makes it effectively impossible to find even approximate solutions to these sets of equations in acceptable time frames.
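As a rough illustration of why accuracy is expensive on a digital machine, the sketch below steps a single toy equation, dx/dt = -x, forward in time using the forward Euler method. The equation, the method and the names (`euler_decay`, `step`) are assumptions chosen for illustration, not the benchmark or the algorithm used by the researchers. It shows only one source of approximation, the discrete time step; finite-precision rounding adds to it.

```python
import math


def euler_decay(step, t_end=1.0):
    """Forward-Euler solution of dx/dt = -x with x(0) = 1, stepped to t_end."""
    n_steps = round(t_end / step)
    x = 1.0
    for _ in range(n_steps):
        x += step * (-x)  # one discrete time step
    return x, n_steps


exact = math.exp(-1.0)  # the true value of x at t = 1

results = []
for step in (0.1, 0.01, 0.001):
    x, n_steps = euler_decay(step)
    error = abs(x - exact)
    results.append((step, n_steps, error))
    print(f"step={step:<6} steps={n_steps:<5} error={error:.2e}")
```

In this toy case, each tenfold reduction in the time step cuts the error roughly tenfold but requires ten times as many steps. That cost applies to every equation in the system, which is why very large coupled systems become so demanding.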

The Problem with Analogue Computing

Analogue computers are effectively ‘similar or analogue’ models of the system being studied, using some continuous material to mimic the variables being studied, such as electricity or water. Admittedly, once you go down to the quantum level, even electricity and water stop being continuous and turn out to be discrete, but analogue computers operate at a sufficiently large physical scale to allow us to ignore these effects (at the interface between computing and quantum effects, pretty much any statement about the subject is only partially correct, including this one).

A wonderful example of analogue computing is MONIAC (Monetary National Income Analogue Computer), built in 1949 by Bill Phillips. MONIAC was designed to model the economy of the United Kingdom, using coloured water, pipes and tanks to represent the flow and accumulation of capital throughout the national economy. The concept of MONIAC was parodied by Terry Pratchett in his book Making Money, in which a similar device known as the Glooper not only modelled, but in fact magically influenced the economy it was simulating.

The advent of electronic amplifiers led to the creation of electronic analogue computers. By arranging the elements of an electronic analogue computer in a suitable configuration, the resulting circuits are governed by differential equations identical to those we want to solve.
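To make this concrete, consider the textbook ideal inverting integrator built from an operational amplifier, a resistor R and a capacitor C. This is a standard circuit used here as an assumed illustration; the article does not specify which circuits the researchers use. Its output voltage obeys:

$$
\frac{dV_{\text{out}}}{dt} = -\frac{1}{RC}\,V_{\text{in}}(t)
\qquad\Longleftrightarrow\qquad
V_{\text{out}}(t) = V_{\text{out}}(0) - \frac{1}{RC}\int_0^t V_{\text{in}}(\tau)\,d\tau
$$

If the output is wired back to the input, so that V_in = V_out, the circuit obeys dV_out/dt = -V_out/(RC). This has the same form as the equation dx/dt = -a x with a = 1/(RC), so the voltage traces out the solution x(t) continuously.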

While not actually capable of controlling the systems they are meant to model, analogue computers do have benefits. They can solve systems of differential equations practically instantly, and can do so in ways that allow the processes taking place to be visualised and understood. However, there are a number of problems associated with analogue computing, of which two will be discussed here. The first is repeatability: analogue computers are extremely sensitive to starting conditions. It is impossible to input exactly the same set of voltages into a set of circuits twice, so repeated simulations tend to deviate from one another over time. And if the same solution is never arrived at twice, it is impossible to know which one is best. The second is range. The voltage from the electronic analogue computer, which represents the solution of the differential equation, cannot exceed the supply voltages that feed the circuit. This limits the largest voltage, and thus the largest number, that the analogue computer can represent, whereas the solution of differential equations may require large numbers.

Mixed Signal Computing: A Potential Solution?

Because of these problems, analogue computing has never been commonly used, and seems to have become side-lined to a small number of highly specific tasks. Since the explosion of desktop computing in the 1980s, digital computing has been increasingly relied upon as the preferred (although never entirely satisfactory) way of solving sets of differential equations.

But all this could be changed, due to a new project undertaken by Drs Bryant and Fernández, and their colleagues at The University of Texas at Austin. They propose to build a computer with mixed analogue and digital components, effectively the best parts of both, to achieve a system that can solve large to very large systems of differential equations. Curiously, Drs Bryant and Fernández have found that the strengths of electronic analogue computers are the weaknesses of digital computers, and vice versa. If they can build this mixed computer, it will cause a significant leap in our ability to simulate large, complex systems in reasonable times.

What the team proposes is an integrated system of mixed-signal integrator cells connected to one another, enabling them to perform complex calculations. Mixed-signal electronics combine analogue and digital electronic elements into hybrid circuits. An important part of how these integrator cells operate is that they can represent real numbers completely, rather than simply approximating them to a certain degree of accuracy. The design also allows any continuous function to be approximated, and performs integrations and other calculations without the small ‘steps’ in value that hamper current digital systems.

So how does this work? The integrator cells combine the binary summation of single small 0 and 1 values, as carried out by digital computers, with the continuous flow of signal into and out of containers that represent these small binary values. Let us view the analogue integrator as a cup being filled with ‘signal’. If a ‘cup’ of signal fills up, instead of overflowing as more signal flows in, the full cup becomes a ‘1’ and a digital count of filled cups is increased by 1. Meanwhile, the analogue cup empties, resetting to 0, and then starts to fill again. Drs Bryant and Fernández named this cup concept an ‘analogue bit’. In this way, the problems of the analogue number limit and the digital number accuracy are solved simultaneously.
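The cup-and-counter idea can be sketched in software as below. This is only an illustrative model under stated assumptions: the function names (`hybrid_integrate`, `saturating_integrate`), the `cup_size` parameter and the borrow step for negative input are hypothetical, and a real cell would integrate continuously in hardware rather than in the discrete steps a Python loop requires.

```python
def hybrid_integrate(samples, dt, cup_size=1.0):
    """Integrate `samples`; return (digital count of full cups, analogue level)."""
    count = 0    # digital part: number of cups filled so far
    level = 0.0  # analogue part: how full the current cup is
    for s in samples:
        level += s * dt
        while level >= cup_size:  # cup is full: empty it and count it
            level -= cup_size
            count += 1
        while level < 0.0:        # assumption: borrow a cup for negative input
            level += cup_size
            count -= 1
    return count, level


def saturating_integrate(samples, dt, rail=1.0):
    """A plain analogue integrator whose output cannot exceed the supply rail."""
    level = 0.0
    for s in samples:
        level = max(-rail, min(rail, level + s * dt))
    return level


dt = 1 / 64
samples = [2.5] * 648  # constant input; the true integral is 25.3125

count, level = hybrid_integrate(samples, dt)
total = count * 1.0 + level
clipped = saturating_integrate(samples, dt)
print(count, level, total, clipped)  # prints: 25 0.3125 25.3125 1.0
```

With these inputs, the hybrid cell reports 25 full cups plus a remaining level of 0.3125, recovering the full value of 25.3125. The plain integrator with the same 1.0 limit gets stuck at its rail.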

The connectivity and functionality of the hybrid integrator cells can be achieved using existing approaches and technologies. However, these novel hybrid components will make it possible for the circuitry to carry out processing simultaneously across the entire interconnected system, in much the same way as an analogue computer operates.

Another innovation of the team’s proposed system is that it would be programmable, enabling any system of differential equations to be represented without hard-wiring the circuitry, as was necessary with the original analogue computers. This means that it can be programmed in the same way as a digital computer, while combining the best qualities of analogue and digital systems.

Testing the Design

To test this concept and compare it against existing supercomputing systems, Drs Bryant and Fernández created a benchmark problem consisting of one million coupled differential equations. This problem is arguably larger than most systems tackled in current simulation scenarios.

They showed that in comparison to a supercomputer (ASCI Purple) costing hundreds of millions of dollars, their hybrid processing concept was thousands of times cheaper and smaller, while also being significantly faster. This performance comparison was made using modelling rather than a physical system, but was convincing nonetheless.

In addition to the benchmark problem solution, the research team created a printed circuit board (PCB) version of their concept, and used this to verify the design of the proposed system. This PCB was used to check the physical behaviour of the design and to ensure that it operated within expected parameters. In testing, the PCB demonstrated the physical and electronic robustness of the concept and suffered no data or system defects.

The physical size and power requirements of the proposed system are tiny compared to current parallel supercomputers, with the potential for a successful mixed-signal processor to be inserted as a processor on a laptop or handheld device. Such a device would rival current state-of-the-art supercomputing systems in terms of its capability to simulate massive systems of differential equations.

A Hybrid Future

In the future, Dr Bryant and Dr Fernández want to take their idea further by building and testing a sequence of increasingly large, complex and sophisticated printed circuit boards containing integrator cells, and integrated circuits with hundreds or thousands of integrator cells. They also want to develop a system to control and program this hardware through a computer. Future versions would have an in-built operating system allowing the circuitry to be programmed directly, with the circuit board integrated as an insert into a laptop or handheld device.

If successfully developed, such a device could be used to rapidly improve a large number of activities reliant on solving huge sets of differential equations. These include weather forecasts, maintenance diagnostics, virtual reality simulation and system design. Also, such a system could be used for neural network and AI-based applications, as neural networks are effectively large interconnected sets of nonlinear differential equations.

Meet the researchers

Dr Michael D. Bryant

Department of Mechanical Engineering

Cockrell School of Engineering

The University of Texas at Austin

Austin, Texas

USA

After obtaining a BS in Bioengineering from the University of Illinois at Chicago in 1972, Dr Michael D. Bryant earned a Master’s in Mechanical Engineering at Northwestern University in 1980 and a PhD in Engineering Science and Applied Mathematics from the same university in 1981. He worked at North Carolina State University as an Assistant Professor from 1981 to 1985, and then as Associate Professor from 1985 to 1988. Since 1988 he has been a Professor at The University of Texas at Austin, and is currently Accenture Endowed Professor of Manufacturing Systems Engineering. His research focus includes mechatronics and tribology. Dr Bryant has over 100 peer-reviewed publications, book chapters and conference papers, and several patents derived from his research. He is a Fellow of the American Society of Mechanical Engineers and a member of the Institute of Electrical and Electronics Engineers and several other societies.

CONTACT

T: (+1) 512 471 7840

E: mbryant@mail.utexas.edu

W: http://www.me.utexas.edu/~bryant/mdbryant.html

Dr Benito R. Fernández

Department of Mechanical Engineering

Cockrell School of Engineering

The University of Texas at Austin

Austin, Texas

USA

Dr Benito R. Fernández began his career in Venezuela, where he studied Chemical Engineering in 1979 and Materials Engineering in 1981. In 1981, he moved to the US to carry out graduate research at MIT, where he received his MS in 1985 and PhD in 1988, both in Mechanical Engineering. Dr Fernández specialises in Applied Intelligence, in particular the use of different technologies to create intelligent devices and systems. He is also an expert in Nonlinear Robust Control and Mechatronics. His research focuses include manufacturing automation, industrial equipment diagnostics and prognosis, evolutionary robotics, hybrid processors, eXtreme (resilient) devices, cyber-physical systems, smart energy systems and mechatronic design. Dr Fernández is the founder and Director of the NERDLab (Neuro-Engineering Research & Development Laboratory) and the LIME (Laboratory for Intelligent Manufacturing Engineering).

CONTACT

T: (+1) 512 471 7852

E: benito@mail.utexas.edu

W: http://www.me.utexas.edu/faculty/faculty-directory/fernandez

KEY COLLABORATORS

Luis Sentis (Aerospace), Glenn Masada (Mechanical), Eric Van Oort (Petroleum), Peter Stone (Computer Science), Ashish Deshpande (Mechanical), The University of Texas at Austin

David Bukowitz, GTI Predictive

FUNDING

National Science Foundation