Working in Space: The Challenge for Mars and Beyond

Professor Karen Feigh and Dr Matthew Miller from the Georgia Institute of Technology examine what support astronauts will need when working outside their spacecraft in deep space, where communication with Earth takes tens of minutes. Software engineer Cameron Pittman also joined the team to help develop functional prototypes that could be tested in the lab and beyond.

Extravehicular Activity (or EVA) describes any work carried out by an astronaut outside a spacecraft, with common tasks including construction, inspecting payloads and making repairs. This work is carefully managed and controlled by NASA, and is one of the most prescriptive types of activity that astronauts carry out. Collectively, NASA astronauts have performed nearly 400 EVAs to date, over a quarter of which have encountered significant incidents such as crew injury, early termination or operational issues. Currently, astronauts and ground support personnel work closely together to ensure crew and vehicle safety, and careful planning and execution minimise unnecessary risks.

Professor Karen Feigh and Dr Matthew Miller at the Georgia Institute of Technology wanted to gain a better understanding of how NASA manages EVAs, so that they could evaluate how the process might be improved for deep-space missions, such as manned missions to Mars, where real-time communication with mission control on Earth is not possible. Of particular interest to them was the use of intelligent, automated ‘decision support’ tools to provide appropriate, timely support to astronauts carrying out an EVA.

Understanding EVAs

To achieve their goals, Professor Feigh and Dr Miller needed to become familiar with the processes that NASA uses to control EVA, and the key roles played by individuals and groups both on the ground and in space – inside and outside the spacecraft.

The only way to achieve this was to spend time at NASA, gaining first-hand insight from experts and witnessing EVAs as they took place. As part of his doctoral studies funded by NASA’s own Space Technology Research Fellowship program, Dr Miller spent forty weeks over a four-year period working with teams at NASA’s Johnson Space Center in Houston, Texas, where he developed close technical relationships with EVA operations experts.

To gain an even deeper understanding, Dr Miller was involved in three International Space Station EVAs and a simulation training exercise, which together amounted to more than thirty hours of cumulative observation. To complement this, he also examined archive footage of historical EVAs, including the Apollo lunar surface operations, and comprehensively reviewed previously published research and development efforts regarding EVAs. Together, these efforts allowed the team to model the underlying constraints that shape EVA operations.

Early in the project, Dr Miller discovered that the EVA work environment was highly social, with effective communication between many different team members being vital. The EVA team involved experts located at different sites, using many detailed work artefacts and information systems. He also found that every EVA was riddled with uncertainties: even though the activities are carefully planned and executed, controllers often needed to act as problem solvers.

Adding Rigour

Professor Feigh and Dr Miller not only needed a comprehensive and extensible understanding of how a present-day EVA is conducted, but also needed to reimagine EVAs for future deep-space missions. For this, the team drew on perspectives from the discipline of ‘cognitive systems engineering’, which emphasises understanding how human operators, their technologies and the work they perform come together to make up a complex system.

This approach provided tools that allowed the team to examine, in a structured, top-down manner, the work performed, the information flows and the underlying constraints that shape EVA operations. Ultimately, it would help them derive the requirements needed to reach their final goal: a prototype decision support system to improve the way EVA operations are performed during deep-space missions.

Important Findings

The team found that the EVA work environment is shaped by two major considerations: the distribution of knowledge across EVA operators and systems, and the harsh environments experienced by the space flight crew.

During an EVA, the extravehicular (EV) crew members are outside the spacecraft and are constrained by the spacesuit that keeps them alive, and the tools and robotic aids that help them accomplish their tasks. Because of these constraints and the heavy physical strain on the EV crew member, the intravehicular (IV) crew members – unsuited astronauts located inside the spacecraft – are positioned to help ease the burden of various aspects of EVA execution. Under present-day EVA operations at the International Space Station, an IV crew member controls the robotic assets to support EV tasks. The mission control centre (MCC) on Earth coordinates every part of the EVA, and is in constant communication with both the EV and IV crew.

Within the MCC, a large team of controllers is dedicated to EVA support, occupying three primary ‘console’ positions: systems, task and airlock. These three positions are supported by dozens of other team members called ‘back room’ controllers. Messages for both the EV and IV crew are condensed and passed among the ground flight team, then relayed through the ‘Ground IV’, one of only two people allowed to communicate directly with the crew during an EVA. As this architecture shows, EVA operations rely on the wealth of technical, systems and operations knowledge within the MCC to ensure mission success.

As human exploration takes the crew farther from Earth, the communication latency between the MCC and the crew will grow to more than 20 minutes for a one-way transmission to destinations such as Mars. As a result, MCC guidance will be inherently limited. Therefore, successful EVA operations will require shifting much of the MCC’s work to the space flight crew, who will have to deal with the moment-by-moment challenges that arise during EVAs.
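To see why real-time support from Earth breaks down, it helps to work out the light-travel time directly. The sketch below is a back-of-the-envelope illustration, not part of the team’s work: the speed of light is exact, but the two Earth-Mars distances are approximate round figures assumed for illustration, since the real distance changes continuously as both planets orbit the Sun.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact, by definition of the metre

# Approximate Earth-Mars distances: assumed round figures for illustration only.
EARTH_MARS_CLOSEST_KM = 54.6e6
EARTH_MARS_FARTHEST_KM = 401e6


def one_way_delay_min(distance_km: float) -> float:
    """Light-travel time, in minutes, for a signal crossing distance_km."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


def round_trip_delay_min(distance_km: float) -> float:
    """Minimum time to send a question and hear an answer (ignores thinking time)."""
    return 2 * one_way_delay_min(distance_km)


if __name__ == "__main__":
    cases = [("closest", EARTH_MARS_CLOSEST_KM), ("farthest", EARTH_MARS_FARTHEST_KM)]
    for label, distance in cases:
        print(f"{label}: one-way {one_way_delay_min(distance):.1f} min, "
              f"round trip {round_trip_delay_min(distance):.1f} min")
```

With these assumed distances, the one-way delay ranges from roughly 3 to 22 minutes, so a single question-and-answer exchange with the MCC could take around three quarters of an hour at worst, before the ground team has even discussed its reply.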

The IV crew members are ideally situated to take on those additional responsibilities. The challenge then becomes effectively translating the work functions of many MCC personnel to a single operator in a deep-space setting. One solution is to develop technology that shoulders part of this workload, giving the IV crew operational insight similar to that currently available at the MCC.

CREDIT: D.S.S. Lim

Decision Support System

Dr Miller and Professor Feigh’s comprehensive analysis of both current EVA operations and those envisaged for deep-space missions allowed them to derive the necessary systems requirements to guide the development of an EVA decision support system. Specifically, they developed a series of cognitive work and information relationship requirements that are unique to the development of systems designed to aid human cognition.

The team wanted to design a computer system that could assist the IV crew with two high-priority tasks previously assigned to MCC personnel: detailed life support system monitoring and management of EVA timeline progress. During EVAs, tracking and adjusting these aspects significantly affects both safety and productivity. Other, less well-defined features of future EVAs, such as future spacecraft, spacesuits, hardware and communication infrastructure, would need to be considered in future work.

Therefore, Professor Feigh and Dr Miller decided to focus their efforts on the life support system and timeline management functions when developing their prototypes. To do this, they called on the skills of an experienced software engineer, Cameron Pittman.

Baseline and Advanced Prototyping

Pittman gave his time freely to help develop the decision support system prototypes, of which there were two: Baseline and Advanced.

The team incorporated present-day EVA processes and in-service technologies into their Baseline prototype, focusing on three main areas: life support system monitoring, timeline material and communication. A defining characteristic of this prototype is that it primarily supports the IV operator and envisions how the IV workstation might look if existing technologies were taken to Mars tomorrow.

The Baseline prototype combined the various present-day EVA work artefacts for the IV operator to use. It included a summary of the timeline and detailed EVA procedures, provided in the form of paper timelines and schedules with minimal alterations to current EVA practices. This prototype also supported two types of communication: audio and text. A digital audio channel connected the EV and IV operators, and a text communication client simulating a five-minute latency, on loan from the NASA Ames Playbook team, linked the IV operator with the MCC.
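A simulated-latency text link like the one used in the Baseline can be pictured as a queue that holds each message until its delivery time arrives. The sketch below is a hypothetical illustration of that idea only; it is not the NASA Ames Playbook client, and the class and method names (`DelayedTextChannel`, `send`, `receive`) are invented for this example.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class Message:
    # Ordered by delivery time, then by send order, so ties stay first-in, first-out.
    deliver_at: float
    seq: int
    sender: str = field(compare=False)
    text: str = field(compare=False)


class DelayedTextChannel:
    """Hypothetical one-way text link that delays every message by a fixed latency."""

    def __init__(self, latency_s: float = 300.0) -> None:  # 300 s = five minutes
        self.latency_s = latency_s
        self._in_flight: list[Message] = []
        self._counter = itertools.count()

    def send(self, sender: str, text: str, now: float) -> None:
        """Queue a message sent at time `now` (in seconds)."""
        msg = Message(now + self.latency_s, next(self._counter), sender, text)
        heapq.heappush(self._in_flight, msg)

    def receive(self, now: float) -> list[Message]:
        """Return every message whose delivery time has arrived, oldest first."""
        delivered = []
        while self._in_flight and self._in_flight[0].deliver_at <= now:
            delivered.append(heapq.heappop(self._in_flight))
        return delivered


if __name__ == "__main__":
    link = DelayedTextChannel()
    link.send("MCC", "Confirm suit pressure check complete", now=0.0)
    print(link.receive(now=120.0))  # still in transit, so nothing is delivered
    for msg in link.receive(now=300.0):
        print(f"{msg.sender}: {msg.text}")
```

A priority queue keyed on delivery time keeps the design simple: the receiver only ever inspects the earliest pending message, and the channel could equally model a longer, Mars-scale delay by changing `latency_s`.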

CREDIT: Andrew Hara

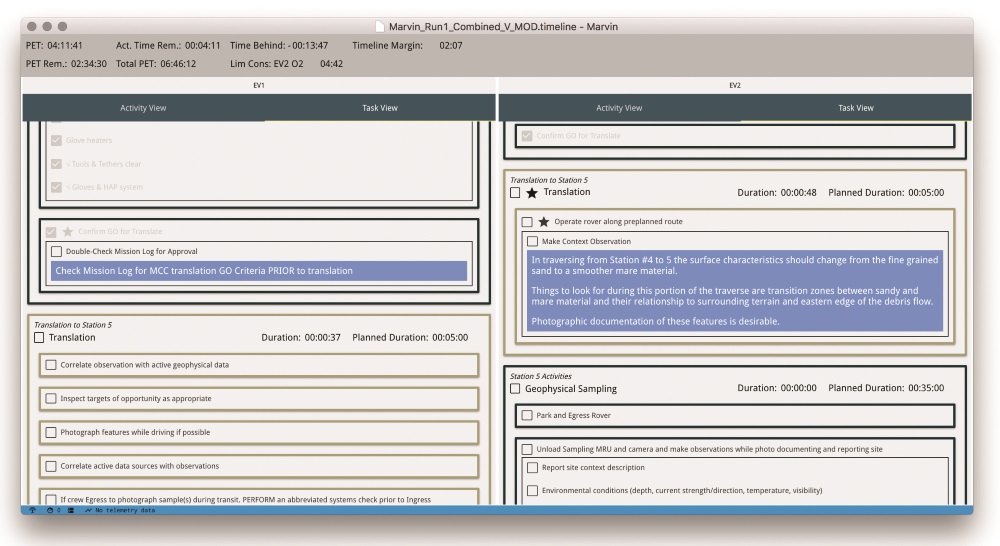

The team’s second prototype, the Advanced decision support prototype, demonstrated how new designs could be leveraged to support the IV operator’s new role in the EVA process. In particular, the team developed a novel timeline management tool integrated with life support monitoring, creating a system they called Marvin, a reference to the intelligent robot in Douglas Adams’s The Hitchhiker’s Guide to the Galaxy. When designing this Advanced prototype, Pittman and Miller worked together on a design solution for managing tasks on the EVA timeline, a non-trivial problem that represented a major shift in technology and practice.

Marvin covered three focus areas (life support monitoring, timeline task management and communication) and added information integration and automatic task progress calculations to reduce the burden on the IV crew.

The team describes their current design as a ‘dark cockpit’, where the display remains ‘dark’ or quiet unless an alert is triggered. Their Advanced prototype assumes that only audio communication would be used between the EV and IV crew, and that there would be time-delayed text communication between the MCC and the IV crew.
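The ‘dark cockpit’ idea can be sketched as a limit check that produces output only when something is wrong. In the minimal sketch below, the parameter names and limits are hypothetical placeholders chosen for illustration; they are not real spacesuit values and are not taken from Marvin.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


# Hypothetical parameters and placeholder limits for illustration only; these are
# NOT real spacesuit values and are not taken from the Marvin prototype.
LIMITS = {
    "suit_pressure": Limit(4.0, 4.5),
    "o2_remaining_pct": Limit(20.0, 100.0),
    "co2_level": Limit(0.0, 8.0),
}


def check_telemetry(telemetry: dict[str, float]) -> list[str]:
    """Return alert messages; an empty list means the display stays 'dark'."""
    alerts = []
    for name, limit in LIMITS.items():
        value = telemetry.get(name)
        if value is None:
            alerts.append(f"{name}: no data")  # missing data is itself worth flagging
        elif not limit.low <= value <= limit.high:
            alerts.append(f"{name}: {value} outside {limit.low}-{limit.high}")
    return alerts


if __name__ == "__main__":
    nominal = {"suit_pressure": 4.3, "o2_remaining_pct": 76.0, "co2_level": 2.1}
    print(check_telemetry(nominal))  # empty list: nothing to show
    low_o2 = dict(nominal, o2_remaining_pct=18.0)
    for alert in check_telemetry(low_o2):
        print("ALERT", alert)
```

The design choice is that silence carries meaning: a busy IV operator can leave the display alone while everything is nominal and only needs to look when an alert appears.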

Proof of the Pudding

The team’s use of cognitive systems engineering helped frame two phases for testing their Baseline and Advanced prototypes. The first drew on three large-scale NASA analogue research programs that simulated future EVA operations. These informed the second, more controlled phase: a laboratory evaluation in which participants tested the prototypes in a simulated EVA.

The Baseline prototype represents current EVA processes and technologies, and so allowed users to identify potential deficiencies in the design. This prototype was designed to adhere as closely as possible to today’s MCC. When managing the EVA tasks during the laboratory evaluation, 64% of the participants rated the Baseline as only ‘Slightly’ effective, indicating that there were substantial opportunities for improvement.

The team’s Advanced prototype, however, represents a first step towards improving support for the IV operator, particularly when managing the timeline of the tasks that need to be carried out while also monitoring a wealth of life support system data. The participants rated this prototype as ‘very’ or ‘extremely’ effective in a number of key areas regarding timeline and life support system management. It was clear to the team that this was just a start, and significant improvements to data displays would be possible in future designs.

Participants in the laboratory tests were easily able to use the new timeline management features to track the progress of the EV crew. They appreciated the ability to ‘click off’ steps as they were completed, which produced accurate moment-to-moment estimates of timeline margin and progress. As a result, the IV operator could focus on communicating with the crew without needing to perform timeline calculations in their head. Unsurprisingly, they commented that this new way of working was much better than using paper-based tools.
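The kind of bookkeeping that ‘clicking off’ steps removes from the operator can be sketched as follows. This is a simplified model under stated assumptions, not Marvin’s actual algorithm: it assumes each step has a planned duration, the EVA has a fixed overall time budget, and the remaining steps will take exactly as long as planned. The class names and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    planned_min: float  # planned duration in minutes
    done: bool = False


class Timeline:
    """Hypothetical EVA timeline tracker driven by 'click-off' events."""

    def __init__(self, steps: list[Step], budget_min: float) -> None:
        self.steps = steps
        self.budget_min = budget_min  # total time allowed for the EVA (assumed fixed)
        self.elapsed_min = 0.0        # EVA clock at the latest click-off

    def click_off(self, name: str, elapsed_min: float) -> None:
        """Mark a step complete at the given EVA elapsed time."""
        for step in self.steps:
            if step.name == name:
                step.done = True
                self.elapsed_min = max(self.elapsed_min, elapsed_min)
                return
        raise KeyError(f"unknown step: {name}")

    def remaining_planned_min(self) -> float:
        return sum(s.planned_min for s in self.steps if not s.done)

    def margin_min(self) -> float:
        """Projected spare time: budget minus (time used + planned time left)."""
        return self.budget_min - (self.elapsed_min + self.remaining_planned_min())

    def progress(self) -> float:
        """Fraction of planned work completed, weighted by planned duration."""
        total = sum(s.planned_min for s in self.steps)
        done = sum(s.planned_min for s in self.steps if s.done)
        return done / total if total else 0.0


if __name__ == "__main__":
    plan = [Step("egress", 30), Step("install hardware", 90),
            Step("inspect payload", 60), Step("ingress", 30)]
    timeline = Timeline(plan, budget_min=240)
    timeline.click_off("egress", elapsed_min=40)  # egress ran 10 minutes long
    print(f"margin {timeline.margin_min():.0f} min, progress {timeline.progress():.0%}")
```

In this made-up example the plan starts with 30 minutes of margin; because egress overran by 10 minutes, one click updates the margin to 20 minutes and progress to about 14%, the arithmetic an IV operator would otherwise have to do mentally.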

Nearly all measures of user performance yielded significant differences between the prototype designs, in favour of the team’s Advanced decision support system.

The team recognises that improving EVA operations is a massive undertaking that will require a huge investment of effort. Their work shows that incremental improvements are possible, and that by clearly articulating meaningful requirements to guide technology development, we will be able to meet the challenges facing future space explorers as they venture deeper into our Solar System.

Meet the researchers

Professor Karen Feigh

School of Aerospace Engineering

Georgia Institute of Technology

Atlanta, GA

USA

Professor Karen Feigh was awarded her PhD in Industrial and Systems Engineering from the Georgia Institute of Technology in 2008. She then became an Assistant Professor in the Institute’s School of Aerospace Engineering, where she is now an Associate Professor. She is currently Principal Investigator on a number of important contracts, including Proactive Decision Support Through Information Modification for the US Office of Naval Research, and, for NASA, Objective Function Allocation Method for Human-Automation/Robotic Interaction and Technologies for Disruption Management in Mixed Initiative Schedule for Human Space Flight.

CONTACT

E: karen.feigh@gatech.edu

T: (+1) 404 385 7686

W: http://www.cec.gatech.edu/

Matthew J. Miller, PhD

JACOBS/JETS | Advanced Exploration Group

NASA Johnson Space Center

XI3 | Astromaterials Research and Exploration Science

Houston, TX

USA

Matthew J. Miller received his PhD in Aerospace Engineering from the Georgia Institute of Technology, Atlanta, GA, in 2017. He is currently an Exploration Research Engineer at Jacobs Engineering, Inc. at the NASA Johnson Space Center, researching advanced, state-of-the-art extravehicular activity flight operations for human spaceflight by leveraging cognitive systems engineering practices. Dr Miller has participated in three separate NASA analogue research programs, accumulating more than 175 hours of simulated future EVA operations experience. He served as an EVA Team Member and Flight Controller, contributing to the development and execution of mission objectives, research design and EVA timelines.

CONTACT

E: matthew.j.miller-1@nasa.gov

Cameron Pittman

Udacity Inc

Chicago, IL

USA

Cameron W Pittman is an experienced Software Engineer who graduated with an MA in Teaching from Belmont University in 2011 and a BA in Physics from Vanderbilt University in 2009. He is currently employed by Udacity as a Full Stack Software Engineer, where he focuses on helping students get feedback on their projects. Amongst other projects, he augments and scales tools that analyse student projects and provide feedback automatically. Cameron also maintains and improves Udacity’s infrastructure and services that connect students with graders.

CONTACT

E: cameron.w.pittman@gmail.com

W: https://hurtlingthrough.space

W: twitter.com/cwpittman

FUNDING

NASA